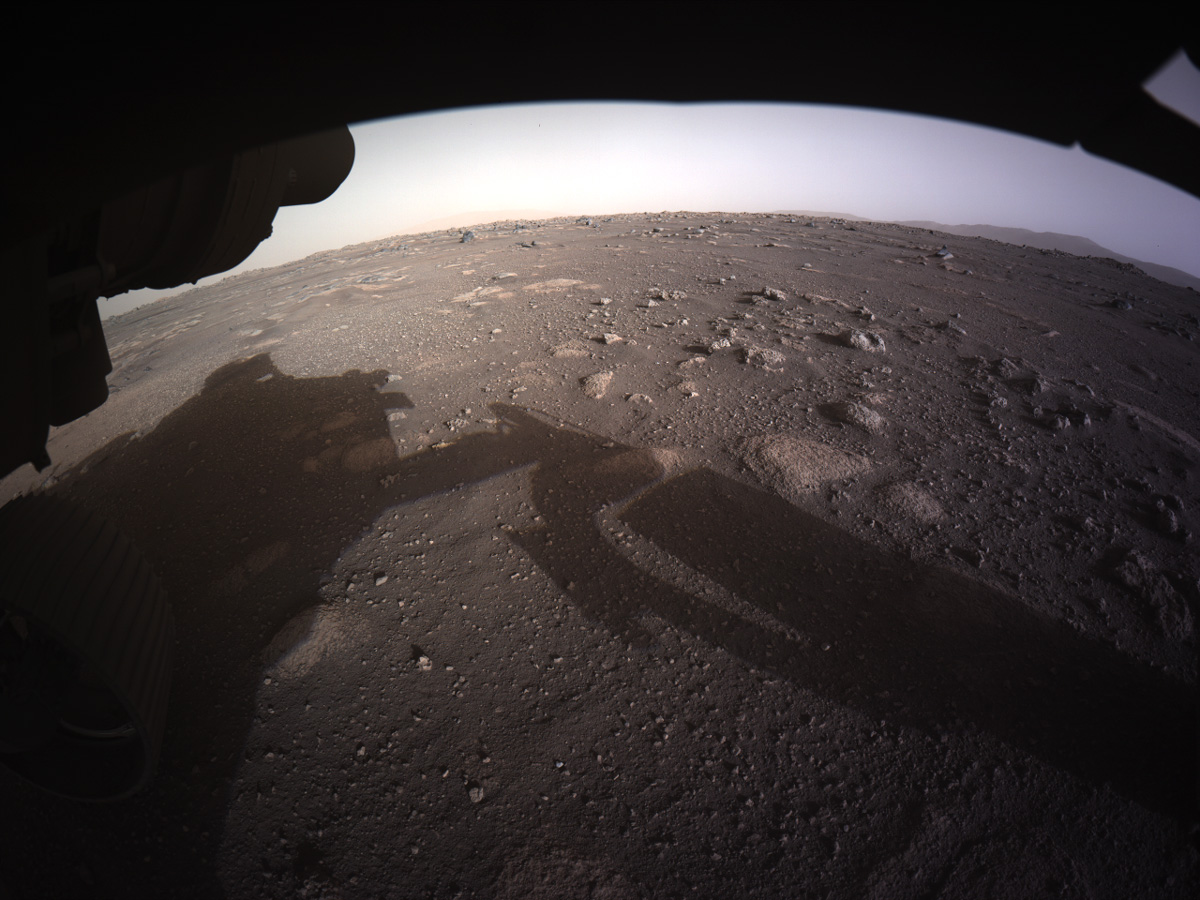

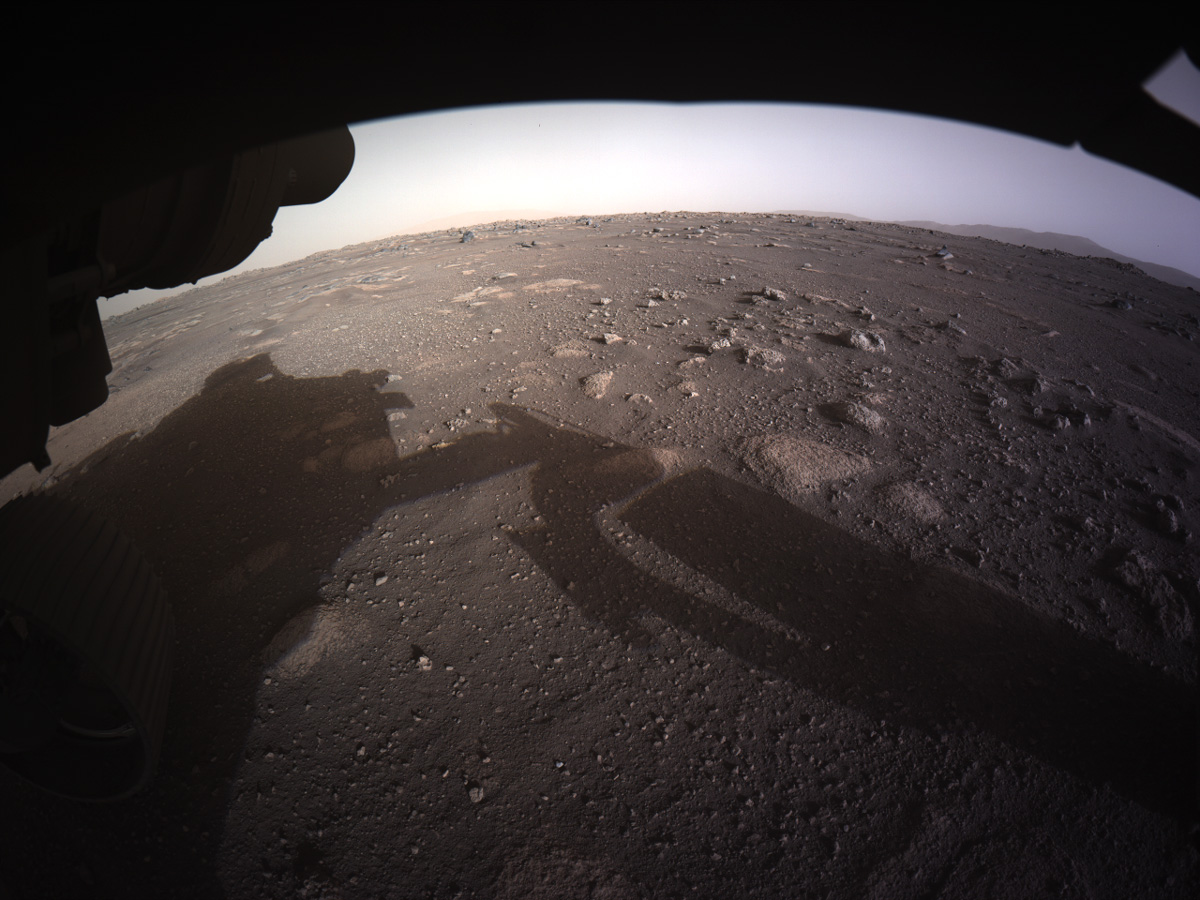

In this lab we will try to feel comfortable with the Expectation Maximization algorithm and its use to fit Gaussian mixture models. To this aim we will start by writing our own EM algorithm for a mixture of univariate Gaussian distributions. We will then complexify our setting by dealing with the multivariate case. We will finally have fun with an application to image segmentation on the first colored picture of Mars sent recently by Perseverance.

Remark: Although we will use the GaussianMixture function of Python for this lab, the current version is not as powerfull as (many) R alternative such as packages mclust or Rmixmod. For instance, such R packages allows for much more covariance structure configurations than the GaussianMixture. If you want to dive seriously into the Gaussian mixture model worlds, these software are top notch!

We consider the following mixture model $$f(y) = \sum_{k=1}^K \tau_k \varphi(y; \mu_k, \sigma^2_k), \qquad k \geq 1,$$ where $\varphi(\cdot, \mu, \sigma^2)$ denotes the p.d.f. of a normal distribution with mean $\mu$ and variance $\sigma^2$ and $\tau_k$ are the cluster membership probabilities, i.e., $\tau_k \geq 0$ and $\sum_{k} \tau_k = 1$.

Write a function that simulates from this mixture model. Play a bit with your brand new function to see how changing the parameters impacts the structure of the observations.

## Code goes here

## %load solutions/question1.py

Write a pseudo code for an EM algorithm on this mixture model and, next, implement it.

## Code goes here

## %load solutions/question2.py

Test your EM algorithm on simulated data and show the evolution of the Gaussian mixture estimates as the number of iterations increases.

## Code goes here

## %load solutions/question3.py

Modify your EM algorithm so that it also outputs the conditional membership probabilities

$$ t_g(y) = \Pr(Z = g \mid Y = y), \qquad y \in \mathcal{Y}, \quad g \in \{1, \ldots, G\},$$and, based on your simulated data, predict the class of each observation using maximum a posteriori.

You may consider plotting the data with colors depending on the estimated component, the fitted mixture density and each individuals gaussian densities.

## Code goes here

## %load solutions/question4.py

Write a function that predict the class of new observations.

## Code goes here

## %load solutions/question5.py

Have a look at the GaussianMixture function of the scikitlearn library and learn how to use it.

Use it on your simulated dataset and compare the output from that of your own function. You may want to:

Are you happy?

## Code goes here

## %load solutions/question6.py

Have a look at the make_blobs function which generate mixture from (very specific) Gaussian distributions. Use this function to generate a sample of size $n=100$ from a Gaussian mixture in $\mathbb{R}^2$ with $G=4$ components.

## Code goes here

## %load solutions/question7.py

Use the GaussianMixture function to estimate a Gaussian mixture model on this sample. Give the mathematical details of the model you fit. Give a plot where you show the prediction class in $[-12, 12]^2$ and another plot where you plot the uncertainties in classification.

## Code goes here

## %load solutions/question8.py

Perform model selection by varying the number of mixture components and the covariance structure. Compare prediction from the top two competitive models.

## Code goes here

## %load solutions/question9.py

Here we will do a small application where we want to perform image segmentation using Gaussian mixture models. Here is a typical roadmap:

## Code goes here

## %load solutions/justForFun.py